Authors: Pablo Pérez (Nokia XR Lab, Madrid, Spain), Francois Blouin (Meta)

Editors: Tobias Hoßfeld (University of Würzburg, Germany), Christian Timmerer (Alpen-Adria-Universität Klagenfurt and Bitmovin Inc., Austria)

To the viewer, a stalled video is a single interruption; operationally, it fragments into different pieces of evidence. The streaming application records a bitrate drop and a rebuffering event, the network operator’s dashboard shows a congested access segment and a burst of packet loss, and the user experience sits between those two partial accounts.

This gap in visibility is the practical problem behind VQEG’s March 2026 white paper Quality of Experience-Aware Management for Collaboration Between Network and Application Providers, published as technical report VQEG_TR_2026_001 [VQEG, 2026]. Its guiding question is direct but difficult: if Content and Application Providers (CAPs) and Communication Service Providers (CSPs) both shape end-user Quality of Experience (QoE), what would they need to share in order to manage it together?

The Case for a Common QoS/QoE Vision

VQEG, the Video Quality Experts Group, is an international forum focused on video quality and QoE measurement. The VQEG 5G-KPI working group studies the relationship between network key performance indicators (KPIs), initially in 5G and extensible to other networks, and the QoE of video services running on top of them. At a July 2024 workshop in Klagenfurt, Austria, the group turned that broad mission into a more concrete agenda around a familiar problem in multimedia delivery: applications and networks are deeply entangled, yet the communities operating them often describe performance with different measurements.

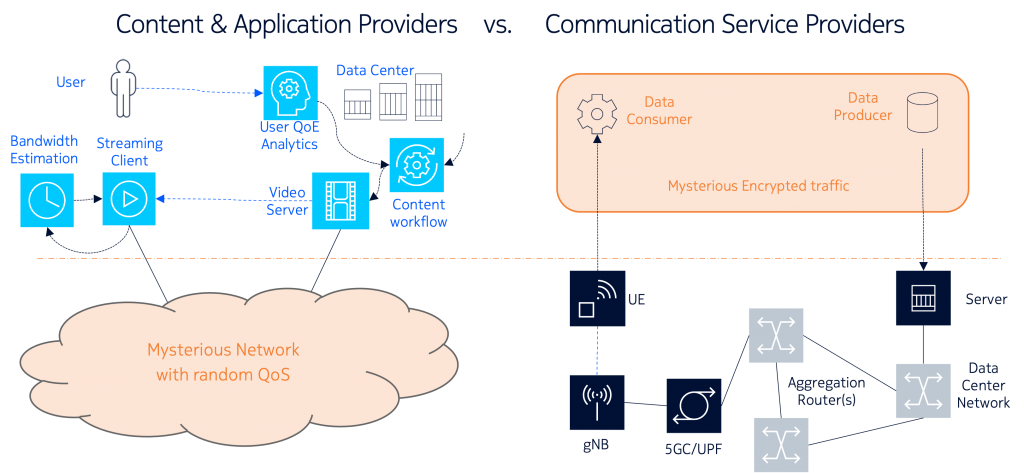

CAPs can typically observe the application layer: startup time, player state, bitrate switches, rebuffering, device behavior, error logs, and user-facing quality. However, they have no visibility into the network topology or performance, and they need to devote significant effort to developing throughput estimation algorithms and control techniques that adapt to varying network conditions. CSPs, in turn, can observe the network layer: throughput, latency, jitter, packet loss, routing, congestion, and radio or access-network conditions. However, they have no visibility into the kind of traffic they transport, especially when it is encrypted, and therefore they have difficulty dimensioning the network properly or providing focused support for customer problems. Figure 1 illustrates this split visibility across the delivery chain and the shared view that QoE-aware management tries to build between CAPs and CSPs.

The same divergence appears in the terminology. In multimedia research, QoE is usually anchored in the user’s experience, following the widely used Qualinet definition of QoE [Qualinet, 2013] as “the degree of delight or annoyance of the user of an application or service,” shaped by the user’s personality, state, expectations, context, and the system itself. This definition keeps the human being in the picture: a model may estimate QoE, and a subjective test may measure it more directly, but the object of interest remains experienced quality.

In networking practice, the starting point is usually Quality of Service (QoS). Metrics such as bandwidth, latency, jitter, and packet loss are measurable, operational, and essential, although they tell only part of the story. A network policy that reduces bitrate may ease congestion and improve latency while the viewer sees a picture that has become visibly worse; a cloud-gaming session may report decent throughput while the player feels the input delay immediately. QoS counters become much more useful when they can be connected to application-level Key Quality Indicators (KQIs), modeled QoE, user-reported QoE, and system QoE [Hoßfeld, 2019].

From the Klagenfurt discussions emerged four needs: a shared vocabulary for QoS and QoE-related concepts; a practical way to model QoS-QoE relationships; requirements for exchanging information between CAPs and CSPs; and early validation through concrete use cases. Before any mechanism could be specified, the group first had to make the language precise enough for both sides to use.

Writing the White Paper

For the organizations that would actually have to operate QoE management, the resulting report frames the problem in practical terms. Which metrics can CAPs and CSPs agree on? Which ones can they expose to one another? How should those measurements be interpreted? When can they trigger action during a session, and when are they more useful afterward for analytics, troubleshooting, or network dimensioning?

The focus on CAPs and CSPs is deliberate because these stakeholders control different parts of the delivery chain. CAPs provide the application or content experience directly to users, while CSPs provide the communication network on which that experience depends. Between them sit devices, access networks, transport networks, content delivery networks, adaptation algorithms, service policies, and business constraints. A video freeze, a cloud-gaming delay, or a broken conference call may involve several of those layers at once, which gives a shared operating language practical value.

Because the contributors come from CAPs, CSPs, equipment vendors, universities, research institutes, and independent QoE experts, the report also reflects a range of operational and research perspectives. The mix includes, among others, Nokia, Meta, YouTube, RISE, Telefónica, AT&T, Ericsson, TikTok, Audible, AVEQ, RWTH Aachen, TU Ilmenau, the University of Padova, the University of Würzburg, Blekinge Institute of Technology, AGH, and Universidad Politécnica de Madrid. That breadth is important: different communities brought different habits, constraints, and preferred measurements into the same conversation.

Published as VQEG_TR_2026_001 in March 2026, the report reviews definitions, QoE models, and relevant standards; organizes the relationships among QoS, KPIs, KQIs, and QoE; describes the CAP-CSP collaboration challenge; and proposes a conceptual framework for exchanging QoS- and QoE-related information. Its examples include short-form video, long-form video, cloud gaming, and video conferencing. Although the scope is broad, the center of gravity remains practical: give the ecosystem a common foundation before arguing about protocols or product-specific tools.

Some Highlights

One of the report’s central contributions is its layered vocabulary. Borrowing the intuition of a networking stack, while still keeping user experience above packet-level quantities, it separates network KPIs such as throughput, latency, and loss [3GPP KPI, 2024] from application KQIs [3GPP QMC, 2025] such as startup delay, rebuffering, media quality, interaction delay, and session stability. Above those layers sits QoE: the user’s perceived quality, whether reported directly or inferred through a model. Terms such as user-reported QoE, modeled QoE, and system QoE receive explicit treatment because collaboration breaks down quickly when a “quality score” means one thing in the player logs and another thing in the network dashboard.

The layered vocabulary also changes the diagnostic problem. A CAP may know that a session suffered repeated stalls and bitrate drops, while the CSP may know that the access link was congested at the same time. If those observations can be correlated, both sides can move from guessing to diagnosis: application issue, network congestion, device limitation, or some interaction among them. Better diagnosis can help a CSP decide where network action is warranted, help a CAP adapt more intelligently, and help both sides avoid optimizations that improve a local metric while leaving the user unhappy.
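As a rough illustration of that correlation step, the sketch below labels each application-side stall by whether it overlaps a network-side congestion interval. The `Interval` type, the `classify_stalls` helper, the labels, and the sample timestamps are all hypothetical and are not taken from the report; a real diagnosis would need clock alignment, more signals, and more categories than two.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Interval:
    """Closed time interval, in seconds since session start (assumed unit)."""
    start: float
    end: float

    def overlaps(self, other: "Interval") -> bool:
        # Two closed intervals overlap when each starts before the other ends.
        return self.start <= other.end and other.start <= self.end


def classify_stalls(stalls: List[Interval], congestion: List[Interval]) -> List[str]:
    """Label each CAP-side stall by whether it coincides with CSP-side congestion."""
    labels = []
    for stall in stalls:
        hit = any(stall.overlaps(c) for c in congestion)
        labels.append("network-correlated" if hit else "uncorrelated")
    return labels


# Hypothetical observations for a single session.
cap_stalls = [Interval(12.0, 14.5), Interval(40.0, 41.0)]   # seen by the player
csp_congestion = [Interval(11.0, 15.0)]                     # seen by the network

print(classify_stalls(cap_stalls, csp_congestion))
# prints ['network-correlated', 'uncorrelated']
```

An "uncorrelated" stall in this toy example would point the investigation toward the application, the device, or a path segment the CSP does not monitor, rather than settle the question.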

The shared state table is the report’s most concrete proposal: a logical view where CAPs and CSPs exchange selected metrics, at appropriate time scales and levels of aggregation, so each side can understand enough of the other’s state to act. For video streaming, such a table might include application-side information such as startup delay, rebuffering, selected representation, or estimated visual quality, alongside network-side information such as congestion indicators or available capacity. For interactive services, the useful signals may shift toward latency, jitter, and responsiveness.
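One way to picture such a table is as a small keyed structure in which each side publishes selected metrics together with their unit and aggregation window. The sketch below is only an assumption about what a logical view could look like; the class names, field names, metric names, and values are hypothetical and do not come from the report, which does not prescribe a data format.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass(frozen=True)
class Metric:
    value: float
    unit: str
    window_s: float  # aggregation window in seconds (assumed convention)


@dataclass
class SharedStateTable:
    """Logical per-session view holding metrics published by each side."""
    session_id: str
    entries: Dict[Tuple[str, str], Metric] = field(default_factory=dict)

    def publish(self, side: str, name: str, metric: Metric) -> None:
        # Only the two stakeholder roles discussed in the text may publish.
        if side not in ("CAP", "CSP"):
            raise ValueError(f"unknown side: {side!r}")
        self.entries[(side, name)] = metric

    def view(self, side: str) -> Dict[str, Metric]:
        """Return the metrics that one side has published."""
        return {name: m for (s, name), m in self.entries.items() if s == side}


# Hypothetical video-streaming session.
table = SharedStateTable(session_id="temp-session-001")
table.publish("CAP", "startup_delay", Metric(1.8, "s", window_s=0.0))
table.publish("CAP", "rebuffering_ratio", Metric(0.03, "ratio", window_s=60.0))
table.publish("CSP", "available_capacity", Metric(4.2, "Mbit/s", window_s=10.0))

print(sorted(table.view("CAP")))
print(table.view("CSP")["available_capacity"].value)
```

Carrying the aggregation window alongside each value reflects the point about time scales: the same metric name can mean something different when averaged over seconds versus a whole session.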

Flexibility is built into the proposal because different services need different metrics, and the useful time scale for a live video call differs from the time scale for post-session analytics. The difficult questions are the operational ones: who can measure a signal reliably, who can act on it, how granular the exchange should be, and how privacy or business constraints shape what can be exposed. Metric sharing remains voluntary and opt-in, with mechanisms such as temporary or pseudonymized session identifiers when granular traffic correlation is needed.
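To make the idea of a pseudonymized session identifier more tangible, the sketch below derives a short token from an internal session ID with a keyed hash. Using HMAC-SHA256, truncating to 16 hex characters, and rotating the key per exchange period are assumptions made for this illustration; the report does not specify a mechanism, and the function and variable names are hypothetical.

```python
import hashlib
import hmac
import secrets


def pseudonymize(session_id: str, key: bytes) -> str:
    """Derive a short pseudonym from an internal session ID using HMAC-SHA256."""
    digest = hmac.new(key, session_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]  # truncation length is an arbitrary choice for this sketch


# A fresh random key per exchange period makes pseudonyms temporary:
# once the key is rotated, old and new tokens can no longer be linked.
key_period_1 = secrets.token_bytes(32)
key_period_2 = secrets.token_bytes(32)

internal_id = "user-42/session-2026-03-01T10:00"  # hypothetical internal ID

token_a = pseudonymize(internal_id, key_period_1)
token_b = pseudonymize(internal_id, key_period_1)
token_c = pseudonymize(internal_id, key_period_2)

print(token_a == token_b)  # same key: stable token, so both sides can correlate
print(token_a == token_c)  # rotated key: the token changes
```

Within one key period the token is stable, which is what allows per-session correlation; across periods it changes, which limits long-term tracking. Whether that balance is acceptable would depend on the privacy and regulatory constraints of each deployment.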

What Is Next?

Seen in this light, the report functions as a foundation for implementation work. It offers a vocabulary, a framework, and a set of open tasks that still need to be made operational. A sensible next step would be to choose one use case and make the model concrete by selecting the relevant metrics, deciding who measures them, defining how they map to QoE, and testing whether the information would actually help CAPs and CSPs make better decisions.

A proof of concept, controlled testbed, or simulation, an option currently under discussion in VQEG, could then address focused feasibility questions. Can the selected metrics be measured reliably? How fast must they be shared? Does per-session information add enough value to justify the complexity? Which side can take action, and what action is safe?

Deployment would also require careful treatment of the operational details surrounding the framework itself. CAPs and CSPs need ways to identify and correlate traffic flows. QoS monitoring must cover enough of the path to find problems where they occur and keep teams from merely shifting blame from one segment to another. Privacy, commercial sensitivity, and regulation will shape what can be shared. The technical framework will have to live inside those constraints.

For the idea to travel beyond a VQEG report, it will also need a standardization path. Parts of the framework may fit naturally in ITU-T Study Group 12, while protocol and system aspects may belong in IETF, 3GPP, MPEG, or related Standards Developing Organizations (SDOs). If that work succeeds, QoE can move from post-session evaluation toward a shared operating language for services and networks.

References

- [VQEG, 2026] P. Pérez, F. Blouin, B. Adsumilli, S. Baldoni, F. Battisti, N. Birkbeck, K. Brunnström, L. M. Contreras, M. Fiedler, J. Folgueira, N. García, E. Halepovic, T. Hoßfeld, T. Karagioules, I. Katsavounidis, K. Koniuch, D. Lindero, S. Nadas, M. Orduna, P. Rojo, A. Raake, R. Rao Ramachandra Rao, W. Robitza, T. Tsou, and M. Zorzi, “VQEG White Paper on Quality of Experience-Aware Management for Collaboration Between Network and Application Providers”, P. Pérez, F. Blouin, eds., Video Quality Experts Group (VQEG), no. VQEG_TR_2026_001, Mar. 2026, doi: 10.66537/OLKA7578.

- [Qualinet, 2013] Qualinet White Paper on Definitions of Quality of Experience (2012). European Network on Quality of Experience in Multimedia Systems and Services (COST Action IC 1003), Patrick Le Callet, Sebastian Möller and Andrew Perkis, eds., Lausanne, Switzerland, Version 1.2, March 2013.

- [3GPP KPI, 2024] 3GPP TS 28.554 V20.1.0 (2026-03). Technical Specification. 3rd Generation Partnership Project; Technical Specification Group Services and System Aspects; Management and orchestration; 5G end to end Key Performance Indicators (KPI) (Release 20).

- [3GPP QMC, 2025] 3GPP TS 28.404 V19.0.0 (2025-09). Technical Specification. 3rd Generation Partnership Project; Technical Specification Group Services and System Aspects; Telecommunication management; Quality of Experience (QoE) measurement collection; Concepts, use cases and requirements (Release 19).

- [Hoßfeld, 2019] Hoßfeld, T., Heegaard, P. E., Skorin-Kapov, L., & Varela, M. (2019, June). Fundamental relationships for deriving QoE in systems. In 2019 Eleventh International Conference on Quality of Multimedia Experience (QoMEX) (pp. 1-6). IEEE.