Introduction

Multimedia content is omnipresent today thanks to the technological advances of recent decades. Content providers such as Netflix and YouTube are a major driver of today’s network traffic; they do not deploy their own streaming architecture but deliver their services over-the-top (OTT). Interestingly, this streaming approach performs well even though it relies on the Hypertext Transfer Protocol (HTTP), which was originally designed for best-effort file transfer rather than for real-time multimedia streaming. The assumption of earlier video streaming research, that streaming on top of HTTP/TCP would not work smoothly due to retransmission delays and throughput variations, has apparently been overcome, as supported by [1].

Streaming over HTTP, which is currently deployed mainly in the form of progressive download, has several other advantages. The infrastructure deployed for traditional HTTP-based services (e.g., Web sites) can also be exploited for real-time multimedia streaming, and typical problems of real-time multimedia streaming such as NAT or firewall traversal do not apply to HTTP streaming. Nevertheless, there are disadvantages: fluctuating bandwidth conditions cannot be handled with the progressive download approach, which is a major drawback especially for mobile networks, where bandwidth variations are large.

One of the first solutions to the problem of varying bandwidth conditions was specified within 3GPP as Adaptive HTTP Streaming (AHS) [2]. The basic idea is to encode the media file/stream into different versions (e.g., with different bitrates or resolutions) and to chop each version into segments of equal length (e.g., two seconds). The segments are hosted on an ordinary Web server and can be downloaded through HTTP GET requests. Adaptation of the bitrate or resolution is performed on the client side for each segment; e.g., the client can switch to a higher bitrate, if bandwidth permits, on a per-segment basis. This has several advantages because the client knows best its own capabilities, the received throughput, and the context of the user.

In order to describe the temporal and structural relationships between segments, AHS introduced the so-called Media Presentation Description (MPD). The MPD is an XML document that associates uniform resource locators (URLs) with the different qualities of the media content and with the individual segments of each quality. This structure binds the segments to their bitrate, resolution, and other properties (e.g., start time and segment duration). Consequently, each client first requests the MPD, which contains the temporal and structural information for the media content, and then, based on that information, requests the individual segments that best fit its requirements. In addition, the industry has deployed several proprietary solutions, e.g., Microsoft Smooth Streaming [3], Apple HTTP Live Streaming [4], and Adobe HTTP Dynamic Streaming [5], which more or less adopt the same approach.
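To make the role of the MPD more concrete, the following minimal sketch reads a heavily simplified MPD and derives segment URLs from it. The sample document, the base URL, and the helper names `list_representations` and `segment_url` are assumptions made for illustration; real MPDs are considerably richer and may address segments in other ways than the template shown here.

```python
import xml.etree.ElementTree as ET

# XML namespace of the MPEG-DASH MPD schema.
NS = {"mpd": "urn:mpeg:dash:schema:mpd:2011"}

# Hypothetical, heavily simplified MPD: one period, two video qualities,
# two-second segments addressed through a URL template.
SAMPLE_MPD = """
<MPD xmlns="urn:mpeg:dash:schema:mpd:2011" type="static"
     mediaPresentationDuration="PT10S" minBufferTime="PT2S"
     profiles="urn:mpeg:dash:profile:isoff-main:2011">
  <Period>
    <AdaptationSet mimeType="video/mp4">
      <SegmentTemplate media="video_$RepresentationID$_$Number$.m4s"
                       duration="2" timescale="1" startNumber="1"/>
      <Representation id="low" bandwidth="500000" width="640" height="360"/>
      <Representation id="high" bandwidth="2000000" width="1280" height="720"/>
    </AdaptationSet>
  </Period>
</MPD>
""".strip()


def list_representations(mpd_text):
    """Return the available qualities, sorted by ascending bitrate."""
    root = ET.fromstring(mpd_text)
    reps = [
        {"id": rep.get("id"), "bandwidth": int(rep.get("bandwidth"))}
        for rep in root.iterfind(".//mpd:Representation", NS)
    ]
    return sorted(reps, key=lambda rep: rep["bandwidth"])


def segment_url(base_url, template, rep_id, number):
    """Expand the two template identifiers used in SAMPLE_MPD."""
    name = template.replace("$RepresentationID$", rep_id)
    name = name.replace("$Number$", str(number))
    return base_url + name


if __name__ == "__main__":
    root = ET.fromstring(SAMPLE_MPD)
    template = root.find(".//mpd:SegmentTemplate", NS).get("media")
    for rep in list_representations(SAMPLE_MPD):
        # http://example.com/ is a placeholder, not a real content server.
        print(rep["bandwidth"],
              segment_url("http://example.com/", template, rep["id"], 1))
```

A client would fetch each resulting URL with an ordinary HTTP GET request and could pick a different representation for every segment number.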

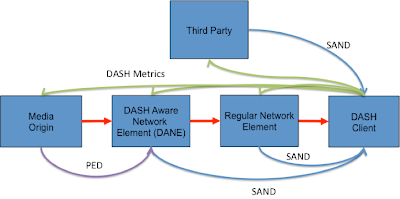

Figure 1: Concept of Dynamic Adaptive Streaming over HTTP.

Recently, ISO/IEC MPEG has ratified Dynamic Adaptive Streaming over HTTP (DASH) [6], an international standard that should enable interoperability among proprietary solutions. The concept of DASH is depicted in Figure 1. The Institute of Information Technology (ITEC) and, in particular, the Multimedia Communication Research Group of the Alpen-Adria-Universität Klagenfurt have participated in and contributed to this standard from the beginning. During the standardization process, many research tools were developed for evaluation purposes and for scientific contributions, including several publications. These tools are provided as open source to the community and are available at [7].

Open Source Tools Suite

Our open source tool suite consists of several components. On the client side, we provide libdash [8] and the DASH plugin for the VLC media player (also available on Android). The suite also includes a JavaScript-based client that uses the HTML5 media source extensions of the Google Chrome browser to enable DASH playback. Furthermore, we provide several server-side resources such as our DASH dataset, which consists of different movie sequences available in different segment lengths as well as bitrates and resolutions, and a distributed dataset mirrored at different locations across Europe. Our datasets have been encoded using our DASHEncoder, which is a wrapper tool for x264 and MP4Box. Finally, a DASH online MPD validation service and a DASH implementation over CCN complete our open source tool suite.

libdash

Figure 2: Client-Server DASH Architecture with libdash.

The general architecture of DASH is depicted in Figure 2, where orange represents standardized parts. libdash comprises the MPD parsing and HTTP parts. The library provides interfaces for the DASH Streaming Control and the Media Player to access MPDs and downloadable media segments. The download order of these media segments is not handled by the library; it is left to the DASH Streaming Control, which is a separate component in this architecture but could also be integrated into the Media Player. In a typical deployment, a DASH server provides segments in several bitrates and resolutions. The client initially receives the MPD through libdash, which provides a convenient object-oriented interface to that MPD. Based on that information, the client can download individual media segments through libdash at any point in time. Varying bandwidth conditions can be handled by switching to the corresponding quality level at segment boundaries in order to provide a smooth streaming experience. This adaptation is part of neither libdash nor the DASH standard and is left to the application that uses libdash.
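Because the adaptation logic is left to the application, the following sketch shows one simple way a streaming control component might choose a quality level at each segment boundary. It is a plain throughput-based heuristic and is not taken from libdash or from the DASH standard; the function names, the safety factor of 0.8, and the example numbers are all assumptions.

```python
def select_bitrate(bitrates_bps, throughput_bps, safety=0.8):
    """Pick the highest bitrate that fits within a fraction of the
    measured throughput; fall back to the lowest bitrate otherwise."""
    budget = throughput_bps * safety
    affordable = [b for b in sorted(bitrates_bps) if b <= budget]
    return affordable[-1] if affordable else min(bitrates_bps)


def plan_session(bitrates_bps, measured_throughputs_bps):
    """Decide one bitrate per segment, given the throughput (in bit/s)
    observed before each segment is requested."""
    return [select_bitrate(bitrates_bps, t) for t in measured_throughputs_bps]


if __name__ == "__main__":
    available = [500_000, 1_000_000, 2_000_000]    # hypothetical qualities
    observed = [3_000_000, 1_500_000, 400_000]     # hypothetical measurements
    # The choice steps down from the highest to the lowest quality
    # as the observed throughput drops.
    print(plan_session(available, observed))
```

Practical players usually smooth the throughput estimate and also take the buffer level into account; this sketch only illustrates that the decision is made per segment on the client side.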

DASH-JS

Figure 3: Screenshot of DASH-JS.

DASH-JS seamlessly integrates DASH into the Web using the HTML5 video element. A screenshot is shown in Figure 3. It is based on JavaScript and uses the Media Source API of Google’s Chrome browser to provide a flexible and potentially browser-independent DASH player. DASH-JS currently supports WebM-based media segments as well as segments based on the ISO Base Media File Format.

DASHEncoder

DASHEncoder is a content generation tool for DASH video-on-demand content, built on top of the open source encoder x264 and GPAC’s MP4Box. With DASHEncoder, the user does not need to encode and multiplex each quality level of the final DASH content separately. Figure 4 depicts the workflow of DASHEncoder. It generates the desired representations (quality/bitrate levels), the fragmented MP4 files, and the MPD file based on a given configuration file or on command line parameters.

Figure 4: High-level structure of DASHEncoder.

The set of configuration parameters covers a wide range of possibilities. For example, DASHEncoder supports different segment sizes, bitrates, resolutions, encoding settings, URLs, etc. The modular implementation of DASHEncoder enables the batch processing of multiple encodings, which are finally assembled within a predefined directory structure described by a single MPD. DASHEncoder is available as open source on our Web site as well as on GitHub, with the aim that other developers will join the project. The content generated with DASHEncoder is compatible with our playback tools.

Datasets

Figure 5: DASH Dataset.

Our DASH dataset comprises multiple full-length movie sequences from different genres (animation, sport, and movie; cf. Figure 5) and is available on our Web site. The DASH dataset is encoded and multiplexed using different segment lengths inspired by commercial products, ranging from 2 seconds (as in Microsoft Smooth Streaming) to 10 seconds per fragment (as in Apple HTTP Live Streaming) and beyond. In particular, each sequence of the dataset is provided with segment lengths of 1, 2, 4, 6, 10, and 15 seconds. Additionally, for the movies of the animation genre we offer a non-segmented version of the videos with a corresponding MPD, which allows for byte-range requests. The MPDs of the dataset are compatible with the current implementations of the DASH VLC plugin, libdash, and DASH-JS. Furthermore, we provide a distributed DASH (D-DASH) dataset which is, at the time of writing, replicated on five sites within Europe, i.e., Klagenfurt, Paris, Prague, Torino, and Crete. This allows for a real-world evaluation of DASH clients that perform bitstream switching between multiple sites, which could be useful, for example, to simulate switching between multiple Content Distribution Networks (CDNs).
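For the non-segmented version, a client addresses a part of the single media file with an HTTP Range header instead of requesting a separate segment file. The sketch below only constructs such a request with the Python standard library and does not contact any server; the URL and the byte offsets are placeholders, and in practice the offsets would be taken from the MPD or from an index within the file.

```python
import urllib.request


def build_range_request(url, first_byte, last_byte):
    """Create an HTTP GET request for an inclusive byte range of a file."""
    if first_byte < 0 or last_byte < first_byte:
        raise ValueError("invalid byte range")
    headers = {"Range": f"bytes={first_byte}-{last_byte}"}
    return urllib.request.Request(url, headers=headers)


if __name__ == "__main__":
    # Placeholder URL and offsets, chosen only for illustration.
    request = build_range_request("http://example.com/video.mp4", 0, 999)
    print(request.get_method(), request.full_url, request.get_header("Range"))
    # Passing the request to urllib.request.urlopen() would perform the
    # download; a server that supports ranges answers with status 206.
```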

DASH Online MPD Validation Service

The DASH online MPD validation service implements the conformance software of MPEG-DASH and enables Web-based validation of MPDs supplied as a file, a URI, or text. As the MPD is defined by an XML schema, it is also possible to use an external XML schema file for the validation.
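As a rough illustration of what an MPD check involves, the sketch below performs a few structural tests with the Python standard library. It is not the MPEG-DASH conformance software and it is not a schema validation; it only tests well-formedness, the root element, and a handful of attributes that the MPD schema requires. The function name and the message texts are assumptions.

```python
import xml.etree.ElementTree as ET

MPD_NS = "urn:mpeg:dash:schema:mpd:2011"


def basic_mpd_problems(mpd_text):
    """Return a list of problems found by a few simple structural checks."""
    try:
        root = ET.fromstring(mpd_text)
    except ET.ParseError as err:
        return [f"not well-formed XML: {err}"]

    if root.tag != f"{{{MPD_NS}}}MPD":
        return ["root element is not MPD in the DASH namespace"]

    problems = []
    for attr in ("profiles", "minBufferTime"):
        if attr not in root.attrib:
            problems.append(f"MPD is missing required attribute '{attr}'")
    if not root.findall(f"{{{MPD_NS}}}Period"):
        problems.append("MPD contains no Period element")
    for rep in root.iter(f"{{{MPD_NS}}}Representation"):
        for attr in ("id", "bandwidth"):
            if attr not in rep.attrib:
                problems.append(
                    f"Representation is missing required attribute '{attr}'")
    return problems


if __name__ == "__main__":
    # Hypothetical MPD with a deliberately missing bandwidth attribute.
    text = (
        '<MPD xmlns="urn:mpeg:dash:schema:mpd:2011" profiles="p" '
        'minBufferTime="PT2S"><Period><AdaptationSet>'
        '<Representation id="1"/></AdaptationSet></Period></MPD>'
    )
    for problem in basic_mpd_problems(text):
        print(problem)
```

A complete validation would additionally check the document against the XML schema of the standard, which requires a schema-aware XML library.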

DASH over CCN

Finally, Dynamic Adaptive Streaming over Content Centric Networks (DASC, a.k.a. DASH over CCN) implements DASH using a CCN naming scheme to identify content segments in a CCN network. For this purpose, it builds on the CCN concept of Jacobson et al. and on PARC's CCNx implementation (www.ccnx.org). In particular, video segments formatted according to MPEG-DASH are available in different quality levels, but CCN is used instead of HTTP for referencing and delivery.

Conclusion

Our open source tool suite is available to the community with the aim of providing a common ground for research in the area of adaptive media streaming and of making results comparable with each other. Everyone is invited to join this activity, to get involved in and excited about DASH.

Acknowledgments

This work was supported in part by the EC in the context of the ALICANTE (FP7-ICT-248652) and SocialSensor (FP7-ICT-287975) projects and partly performed in the Lakeside Labs research cluster at AAU.

References

[1] Sandvine, “Global Internet Phenomena Report 2H 2012”, Sandvine Intelligent Broadband Networks, 2012.

[2] 3GPP TS 26.234, “Transparent end-to-end packet switched streaming service (PSS)”, Protocols and codecs, 2010.

[3] A. Zambelli, “IIS Smooth Streaming Technical Overview”, Technical Report, Microsoft Corporation, March 2009.

[4] R. Pantos, W. May, “HTTP Live Streaming”, IETF draft, http://tools.ietf.org/html/draft-pantos-http-live-streaming-07 (last access: Feb 2013).

[5] Adobe HTTP Dynamic Streaming, http://www.adobe.com/products/httpdynamicstreaming/ (last access: Feb 2013).

[6] ISO/IEC 23009-1:2012, Information technology – Dynamic adaptive streaming over HTTP (DASH) – Part 1: Media presentation description and segment formats.

[7] ITEC DASH, http://dash.itec.aau.at

[8] libdash open git repository, https://github.com/bitmovin/libdash