The 154th MPEG meeting took place in Santa Eulària, Spain, from April 27 to May 1, 2026. The official MPEG press release can be found here. This report highlights key outcomes from the meeting, with a focus on research directions relevant to the ACM SIGMM community:

- Exploration on MPEG Gaussian Splat Coding (GSC)

- Draft Joint Call for Proposals: Video Compression Beyond VVC

- Energy-aware Streaming in MPEG-DASH

- MPEG-AI: Vision and Scenarios for Artificial Intelligence in Multimedia

- MPEG Roadmap

Exploration on MPEG Gaussian Splat Coding (GSC)

The MPEG WG 2 Technical Requirements group, jointly with WG 4 (Video Coding), WG 5 (JVET: Joint Video Coding Team(s) with ITU-T SG 16), and WG 7 (Coding of 3D Graphics and Haptics), advanced its exploration of Gaussian Splat Coding (GSC) by producing draft requirements and use cases, both of which remain subject to change. Gaussian splatting, introduced in a landmark 2023 ACM SIGGRAPH paper by Kerbl et al. [Kerbl, 2023], represents 3D scenes as collections of anisotropic Gaussian primitives carrying geometry (x, y, z positions) and appearance attributes (opacity, scale, rotation, and spherical harmonics coefficients for view-dependent color), enabling photorealistic novel-view synthesis with real-time rendering. Because raw Gaussian splat data can be extremely large and the ecosystem of proprietary formats (.ply, .splat, .spz, etc.) is fragmented, MPEG has identified a clear need for interoperable, efficient compression standards. Two exploration tracks are currently being pursued: I-3DGS, which operates on Gaussian splats in the well-established “INRIA” format as a symmetric encode/decode pipeline, and A-3DGS, which allows alternative learned representations and training-integrated approaches.
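To give a feel for why raw splat data grows so quickly, the attribute list above can be turned into a rough per-primitive storage estimate. The sketch below is illustrative only: the `SplatLayout` class is hypothetical, and it assumes 32-bit floats, RGB color, and degree-3 spherical harmonics, none of which is stated in the MPEG draft documents.

```python
from dataclasses import dataclass

FLOAT32_BYTES = 4  # assumption: every attribute is stored as a 32-bit float


@dataclass(frozen=True)
class SplatLayout:
    """Hypothetical per-primitive attribute layout for an uncompressed splat."""
    sh_degree: int = 3       # assumption: degree-3 spherical harmonics
    color_channels: int = 3  # assumption: RGB

    @property
    def sh_coefficients(self) -> int:
        # A degree-d expansion has (d + 1)^2 basis functions per color channel.
        return (self.sh_degree + 1) ** 2 * self.color_channels

    @property
    def floats_per_splat(self) -> int:
        position = 3  # x, y, z
        opacity = 1
        scale = 3     # one scale factor per principal axis
        rotation = 4  # quaternion
        return position + opacity + scale + rotation + self.sh_coefficients

    def raw_bytes(self, num_splats: int) -> int:
        return num_splats * self.floats_per_splat * FLOAT32_BYTES


layout = SplatLayout()
print(layout.floats_per_splat)                   # 59
print(layout.raw_bytes(1_000_000) / 1e6, "MB")   # 236.0 MB
```

Under these assumptions, a scene with one million primitives already occupies a couple of hundred megabytes before any compression, and most of that is spherical harmonics data.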

The draft requirements, still evolving, currently cover representation, coding, and system aspects across both tracks, with an additional lightweight profile targeting resource-constrained devices such as mobile phones (Snapdragon 8 Gen 3/Elite) and HMDs (Snapdragon XR2 Gen 2, e.g., Meta Quest 3). Among the coding requirements under consideration are lossy and lossless compression with variable bitrate, spatial and temporal random access, progressive and scalable decoding (quality, Level of Detail (LoD), attribute subsets), and error resilience. Notably, the lightweight profile currently proposes hard complexity constraints (i.e., real-time encode/decode on 2024/2025 mobile hardware, a 2 GB runtime memory cap, and at most four concurrent video decoder sessions), reflecting MPEG’s intent to enable a fast-deployment path for interoperable interchange and storage of static Gaussian splat assets. Alongside the requirements, a draft set of 27 use cases has been identified, spanning consumer XR (telepresence, gaming, social media, retail), professional media (movie production, sports broadcasting, immersive journalism), industrial applications (digital twins, Building Information Modeling (BIM), structure inspection, disaster assessment), and emerging hybrid representations such as Gaussian splats attached to deformable meshes for avatar animation and rigging. Several of these use cases are motivating draft requirements around primitive ordering preservation and stable identifier signaling for external metadata associations, though the details of these provisions may still change.
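The complexity constraints quoted for the lightweight profile lend themselves to a simple conformance-style check. The following is a minimal sketch, not anything defined by MPEG: `DecoderBudget` and `lightweight_violations` are hypothetical names, "2 GB" is read as 2 × 10^9 bytes, and "real-time" is interpreted as decoding each frame within the frame interval at an assumed target frame rate.

```python
from dataclasses import dataclass

# Draft values quoted in the report; they may still change.
MAX_RUNTIME_MEMORY_BYTES = 2 * 10**9  # assumption: "2 GB" read as 2e9 bytes
MAX_VIDEO_DECODER_SESSIONS = 4


@dataclass(frozen=True)
class DecoderBudget:
    """Hypothetical measurements of a decoder on a target device."""
    peak_memory_bytes: int
    video_decoder_sessions: int
    decode_ms_per_frame: float
    target_fps: float = 30.0  # assumption: real-time means keeping up with this rate


def lightweight_violations(budget: DecoderBudget) -> list[str]:
    """Return the draft constraints that the measured budget would violate."""
    violations = []
    if budget.peak_memory_bytes > MAX_RUNTIME_MEMORY_BYTES:
        violations.append("runtime memory exceeds the 2 GB cap")
    if budget.video_decoder_sessions > MAX_VIDEO_DECODER_SESSIONS:
        violations.append("more than four concurrent video decoder sessions")
    if budget.decode_ms_per_frame > 1000.0 / budget.target_fps:
        violations.append("decode time exceeds the real-time frame budget")
    return violations


print(lightweight_violations(DecoderBudget(1_500_000_000, 3, 12.0)))       # []
print(len(lightweight_violations(DecoderBudget(3_000_000_000, 6, 40.0))))  # 3
```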

Research aspects: Even at this early draft stage, the direction of MPEG’s GSC work opens a rich set of research opportunities. On the compression side, the dual-track structure raises open questions around rate-distortion-complexity optimization for both geometry-based and video-codec-based pipelines, including temporally coherent coding of dynamic (tracked and non-tracked) Gaussian sequences and attribute-group-aware progressive coding. The QoE angle is equally pressing: no widely accepted perceptual quality metric yet exists for 6DoF Gaussian splat rendering, and the community can contribute splat-artifact-aware metrics, view-consistency measures, and subjective evaluation methodologies. The envisioned lightweight profile points to a need for co-design of decoders and real-time renderers targeting mobile GPU architectures, offering opportunities in GPU-friendly bitstream layouts and LoD-driven streaming. From a systems and networking perspective, the spatial and temporal random-access provisions, combined with the breadth of use cases demanding adaptive streaming to diverse devices (HMDs, phones, TVs, browsers), map naturally onto adaptive bitrate research, ROI- and view-dependent segment delivery, and loss-resilient transmission of splat parameters. Finally, the emerging use cases around hybrid mesh-Gaussian avatars, scene editing, and semantic metadata associations introduce new multimedia content management and interactive media challenges that go well beyond traditional video streaming and are squarely within the scope of ACM SIGMM’s research community.

Draft Joint Call for Proposals: Video Compression Beyond VVC

MPEG’s Joint Video Experts Team (JVET) — operating jointly under ITU-T SG21 and ISO/IEC JTC 1/SC 29 — advanced a draft Joint Call for Proposals (CfP) for a new generation of video compression technology with capabilities that would substantially exceed those of the current Versatile Video Coding (VVC) standard (Rec. ITU-T H.266 | ISO/IEC 23090-3). The final CfP is planned for July 2026, with proposal submissions evaluated at a JVET meeting in January 2027 and a tentative target of a completed standard by October 2029. The overarching goal is to solicit compression technology that significantly improves upon VVC’s Main 10 Profile in terms of rate-distortion performance, encoder/decoder implementability, applicability to diverse content types, and additional features such as low latency, error robustness, and scalability, while explicitly recognizing that practical fast encoding is increasingly important across a growing range of applications.

The draft CfP defines four test cases. The primary test case targets improved compression without runtime constraints, spanning several content categories: SDR random-access at UHD/4K and HD resolutions, SDR low-delay HD (targeting conversational and gaming applications), HDR content under both PQ and HLG transfer functions at UHD, gaming low-delay HD, and user-generated content. Three additional test cases impose encoder runtime constraints relative to the VVC Test Model (VTM) reference encoder, enabling JVET to characterize the compression-versus-speed trade-off across submissions. Formal subjective evaluation will follow the degradation category rating (DCR) methodology per ITU-R BT.500. Importantly, the CfP explicitly addresses neural and learned components: proponents must disclose what training data was used and are prohibited from using any test sequence as training material, and source code (incl. training scripts or parameter derivation procedures) must be made available for accepted technologies entering the core experiments process. The draft notes that specific test sequences and target bitrates may still change before the final CfP is issued.

Research aspects: The runtime-constrained test cases create a natural framework for studying the compression-complexity Pareto frontier for both classical and learned codecs. The inclusion of user-generated content and gaming video as distinct categories invites research into content-adaptive coding tools and perceptual quality metrics tailored to these sources, as does the HDR coverage with its use of weighted PSNR alongside MS-SSIM. The explicit allowance for neural and learned components, with mandatory training data disclosure and source code requirements, signals that JVET anticipates hybrid and end-to-end learned codecs as serious contenders, making codec-agnostic adaptive streaming, QoE modeling for learned video codecs, and large-scale perceptual quality benchmarking timely topics for the ACM SIGMM community.
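As an illustration of what studying the compression-complexity Pareto frontier involves, the sketch below extracts the non-dominated operating points from a set of codec configurations. It is a generic sweep, not a procedure taken from the draft CfP; the `CodecPoint` fields and all numbers are made up for the example.

```python
from typing import NamedTuple


class CodecPoint(NamedTuple):
    name: str
    runtime_ratio: float   # encoder runtime relative to an anchor (lower is better)
    bitrate_saving: float  # % bitrate reduction at equal quality (higher is better)


def pareto_front(points: list[CodecPoint]) -> list[CodecPoint]:
    """Keep points that no other point beats on both runtime and bitrate saving."""
    # Sort by runtime; among equal runtimes, put the larger saving first.
    ordered = sorted(points, key=lambda p: (p.runtime_ratio, -p.bitrate_saving))
    front: list[CodecPoint] = []
    best_saving = float("-inf")
    for p in ordered:
        # A slower point is only worth keeping if it saves strictly more bitrate.
        if p.bitrate_saving > best_saving:
            front.append(p)
            best_saving = p.bitrate_saving
    return front


# Synthetic operating points, for illustration only.
candidates = [
    CodecPoint("A", 0.5, 5.0),
    CodecPoint("B", 1.0, 20.0),
    CodecPoint("C", 1.0, 15.0),
    CodecPoint("D", 2.0, 18.0),
    CodecPoint("E", 4.0, 35.0),
]
print([p.name for p in pareto_front(candidates)])  # ['A', 'B', 'E']
```

Points C and D drop out because B achieves a larger saving at equal or lower runtime; the remaining points trace the trade-off curve that runtime-constrained test cases would sample.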

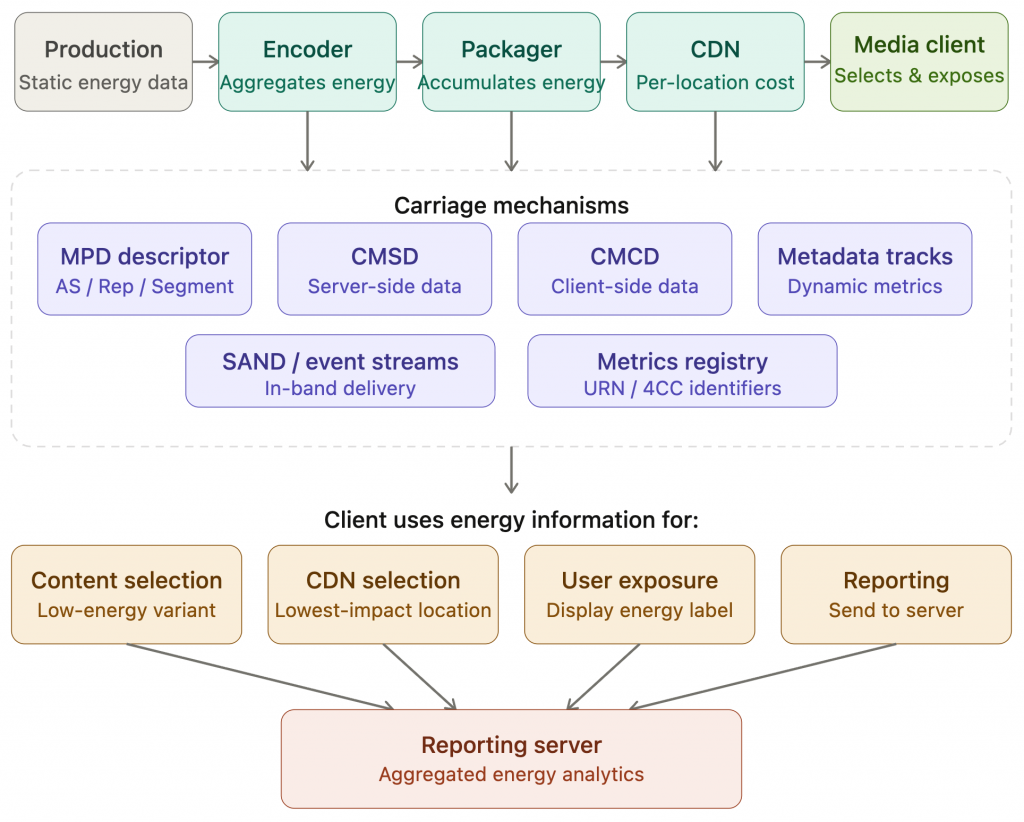

Energy-aware Streaming in MPEG-DASH

MPEG’s WG 3 (Systems/DASH) is developing a framework for integrating energy-related information into adaptive streaming workflows, currently documented as a Technology under Consideration (TuC) in the DASH specification. The proposed framework treats energy as a first-class design metric alongside QoE, latency, and throughput, and defines an end-to-end approach for assigning, aggregating, and propagating energy consumption data across the entire media delivery chain — from production and encoding through CDN distribution to the client. A key design principle is extensibility: rather than hardcoding specific metrics, the framework proposes a common registry of energy-related metrics (such as energy indices or carbon indices) identified via URNs or 4CC codes, inspired by existing registries like MP4RA and DASH-IF. Energy information may be carried through a variety of existing DASH mechanisms, including MPD descriptors at multiple granularity levels (Adaptation Set, Representation, Segment, Service Location), CMCD/CMSD extensions, metadata tracks, SAND messages, and event streams. A dedicated Energy descriptor in the MPD is proposed, analogous to existing Accessibility descriptors, to expose energy information to clients and applications for representation selection, user exposure, and reporting to back-end servers.
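To make the idea of MPD-level energy signaling concrete, the following sketch attaches a per-Representation energy value using a generic DASH descriptor and reads it back on the client side. It is only an approximation of the concept: the dedicated Energy descriptor is still a proposal, so a `SupplementalProperty` element stands in for it here, and the scheme URN `urn:example:energy-index:2026` and the energy values are invented for illustration.

```python
import xml.etree.ElementTree as ET

MPD_NS = "urn:mpeg:dash:schema:mpd:2011"
ENERGY_SCHEME = "urn:example:energy-index:2026"  # hypothetical scheme identifier


def q(tag: str) -> str:
    """Qualify a tag name with the DASH MPD namespace."""
    return f"{{{MPD_NS}}}{tag}"


def build_adaptation_set(reps: list[tuple[str, int, float]]) -> ET.Element:
    """Build an AdaptationSet whose Representations carry an energy descriptor."""
    aset = ET.Element(q("AdaptationSet"), {"mimeType": "video/mp4"})
    for rep_id, bandwidth, energy_index in reps:
        rep = ET.SubElement(
            aset, q("Representation"), {"id": rep_id, "bandwidth": str(bandwidth)}
        )
        # Stand-in for the proposed Energy descriptor (assumption).
        ET.SubElement(
            rep,
            q("SupplementalProperty"),
            {"schemeIdUri": ENERGY_SCHEME, "value": str(energy_index)},
        )
    return aset


def read_energy(aset: ET.Element) -> dict[str, float]:
    """Client side: map Representation id to its signaled energy index."""
    energy = {}
    for rep in aset.findall(q("Representation")):
        for prop in rep.findall(q("SupplementalProperty")):
            if prop.get("schemeIdUri") == ENERGY_SCHEME:
                energy[rep.get("id")] = float(prop.get("value"))
    return energy


aset = build_adaptation_set([("720p", 3_000_000, 1.5), ("1080p", 6_000_000, 2.5)])
parsed = ET.fromstring(ET.tostring(aset))  # round-trip through serialized XML
print(read_energy(parsed))  # {'720p': 1.5, '1080p': 2.5}
```

Identifying the metric through `schemeIdUri` mirrors the registry idea in the text: a client that does not recognize the scheme can simply ignore the descriptor.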

The April 2026 update reported significant progress on two related fronts. A 5G-MAG workshop co-organized with 3GPP SA4 and Greening of Streaming (March 2026) highlighted growing industry consensus around practical energy measurement, surfacing findings such as the dominant role of device eco-mode settings and content brightness over codec or resolution choices in determining end-device energy consumption, and the challenge of reproducible cloud-based energy measurement. In parallel, 3GPP’s Rel-20 study on media energy consumption exposure (FS_Energy_Ph2_MED) reached 80% completion and is expected to conclude in June 2026, with normative work to follow. Notably, 3GPP’s current draft conclusions focus on generic architectural enablers, specifically a new Energy Information Application Function, while explicitly deferring media-layer and client-driven energy optimization to external bodies such as MPEG, SVTA, and DVB. This positions MPEG-DASH’s manifest-based energy signaling work as the natural venue for maturing the streaming-level mechanisms that 3GPP may later reference.

Research aspects: This work opens several timely directions. Energy-aware ABR algorithm design, i.e., jointly optimizing QoE and energy across representation selection, CDN choice, and client device settings, is a natural extension of the existing adaptive streaming research agenda. The proposed metrics registry and MPD-level signaling create opportunities for dataset construction and benchmarking, building on emerging open datasets such as COCONUT [Tashtarian, 2024] and VEED [Linder, 2024]. The finding that device-side factors (eco-mode, display brightness) dominate energy consumption over codec and bitrate choices challenges some common assumptions and calls for more holistic QoE-energy modeling. Finally, the cross-SDO coordination between MPEG, 3GPP, IETF (GREEN working group), and Greening of Streaming presents opportunities for the ACM SIGMM community to contribute to the design of interoperable, standardized energy reporting APIs for streaming services.
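One simple way to picture energy-aware ABR is a throughput-constrained selection rule that trades a quality score against a signaled energy cost. The sketch below is a toy formulation under stated assumptions, not an algorithm from the MPEG documents: the `Representation` fields, the linear utility `qoe - energy_weight * energy_index`, the safety factor, and the ladder values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Representation:
    rep_id: str
    bitrate_kbps: int
    qoe: float           # hypothetical quality score (higher is better)
    energy_index: float  # hypothetical relative energy cost (lower is better)


def select_representation(
    reps: list[Representation],
    throughput_kbps: float,
    energy_weight: float,
    safety: float = 0.8,
) -> Representation:
    """Pick the feasible representation maximizing qoe - energy_weight * energy."""
    budget = safety * throughput_kbps
    feasible = [r for r in reps if r.bitrate_kbps <= budget]
    if not feasible:
        # Nothing fits the throughput budget: fall back to the lowest bitrate.
        return min(reps, key=lambda r: r.bitrate_kbps)
    return max(feasible, key=lambda r: r.qoe - energy_weight * r.energy_index)


# Synthetic bitrate ladder, for illustration only.
ladder = [
    Representation("360p", 1_000, 60.0, 1.0),
    Representation("720p", 3_000, 80.0, 1.5),
    Representation("1080p", 6_000, 90.0, 2.5),
    Representation("2160p", 16_000, 95.0, 5.0),
]
print(select_representation(ladder, 25_000, energy_weight=0.0).rep_id)   # 2160p
print(select_representation(ladder, 25_000, energy_weight=5.0).rep_id)   # 1080p
print(select_representation(ladder, 25_000, energy_weight=20.0).rep_id)  # 720p
```

With ample throughput, raising `energy_weight` moves the choice down the ladder, which is the kind of QoE-energy trade-off that a signaled energy metric would let clients make explicitly.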

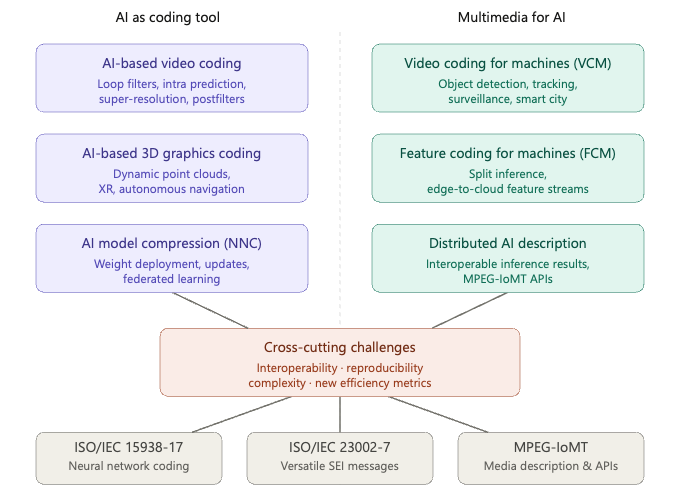

MPEG-AI: Vision and Scenarios for Artificial Intelligence in Multimedia

The first edition of ISO/IEC TR 23888-1 serves as the foundational vision document for the MPEG-AI series (ISO/IEC 23888). The document maps out how AI and neural network technologies interact with multimedia standardization along two complementary axes: (i) AI as a multimedia coding tool (e.g., AI-based video compression, 3D point cloud coding) and (ii) multimedia as input for AI consumption (e.g., video coding optimized for machine vision tasks). Under this umbrella, the document surveys six technical areas:

- AI-based video coding: neural network components are explored as hybrid additions to VVC-style codecs, covering in-loop filters, intra prediction, super-resolution via reference picture resampling, and content-adaptive postfilters transmitted via SEI messages using the Neural Network Coding standard (NNC, ISO/IEC 15938-17).

- AI-based 3D graphics coding: the focus is on dynamic point clouds for immersive (XR, gaming) and machine-oriented (autonomous navigation, BIM) applications, where sparsity and geometric irregularity pose unique challenges beyond those faced by image/video AI codecs.

- AI model compression (NNC): addresses the bandwidth-efficient deployment and incremental updating of neural network weights to devices, with use cases ranging from adaptive streaming ABR models to federated learning and postfilter delivery.

- Video coding for machines (VCM): targets compression optimized for downstream AI tasks such as object detection, tracking, and content moderation, with applications in surveillance, intelligent transportation, smart cities, and industrial inspection.

- Feature coding for machines (FCM): extends this to split-inference architectures where intermediate feature maps, rather than reconstructed video, are compressed and transmitted between edge devices and servers.

- Distributed AI media description: addresses the interoperable representation and API-level exchange of AI inference results (e.g., bounding boxes, segmentation masks) between networked media analyzers, as specified in the MPEG-IoMT suite.

Research aspects: The hybrid codec paradigm raises open questions around joint optimization of traditional and learned tools and complexity-aware training for mobile targets. The VCM and FCM tracks call for new task-oriented quality metrics capturing machine-task performance as a function of bitrate, an area where the multimedia and computer vision communities can collaborate. The split-inference and feature coding scenarios introduce latency-constrained compression problems for edge-to-cloud pipelines, which naturally connect to adaptive streaming and IoT research. Finally, the reproducibility and bit-exactness challenges highlighted in the document — hardware-dependent inference, non-deterministic training, and the absence of standardized evaluation environments — present an opportunity for the community to develop shared benchmarking infrastructure for learned multimedia codecs.

MPEG Roadmap

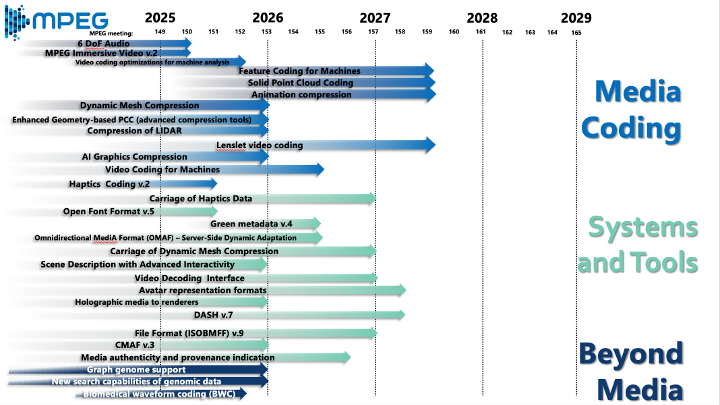

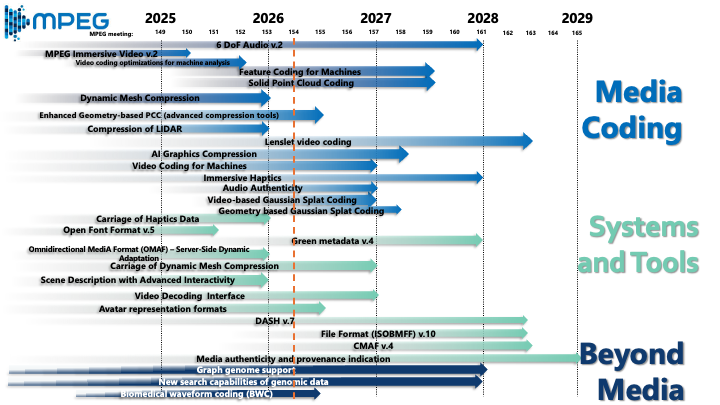

MPEG released an updated roadmap at its 154th meeting, reflecting the current status and near-term trajectory of its standardization activities across three broad pillars. Under Media Coding, work nearing completion includes MPEG Immersive Video v.2, Feature Coding for Machines, Solid Point Cloud Coding, and Dynamic Mesh Compression, while longer-horizon efforts cover AI Graphics Compression, Video Coding for Machines, Lenslet video coding, and — directly relevant to this report — both Video-based and Geometry-based Gaussian Splat Coding tracks. Under Systems and Tools, near-term deliverables include DASH v.7, Green metadata v.4, and Carriage of Haptics Data, with CMAF v.4 and File Format (ISOBMFF) v.10 on a slightly longer timeline. The Beyond Media pillar continues to advance genomic data search and biomedical waveform coding (BWC), alongside media authenticity and provenance indication — underscoring MPEG’s expanding scope well beyond traditional audiovisual applications.

Research aspects: The roadmap highlights several intersecting research opportunities. The convergence of volumetric and neural representations (point clouds, dynamic meshes, Gaussian splats, and lenslet video, all progressing in parallel) raises open questions around unified rate-distortion frameworks and cross-format QoE evaluation for 6DoF experiences. The simultaneous progression of Video Coding for Machines and Feature Coding for Machines alongside traditional human-centric codecs calls for research into adaptive pipelines that can serve both human and machine consumers from a shared bitstream. The Green metadata track connects directly to the energy-aware streaming work discussed above, underscoring the need for end-to-end energy modeling that spans codec choice, packaging, delivery, and consumption. Finally, the Beyond Media thread (particularly genomic data and biomedical waveforms) signals an expanding definition of “multimedia” that the ACM SIGMM community may wish to engage with as compression, retrieval, and QoE methods developed for audiovisual content find applicability in life sciences.

Concluding Remarks

The 154th MPEG meeting in Santa Eulària reflects a standards body in active transition, broadening its scope from traditional audiovisual compression toward a richer landscape that encompasses neural scene representations, AI-native codecs, energy-aware delivery, and even biomedical data. The Gaussian Splat Coding exploration, the next-generation video compression Call for Proposals, the MPEG-AI vision document, and the energy-aware streaming framework each address distinct but interconnected challenges: how to represent, compress, deliver, and consume increasingly complex and diverse media efficiently and sustainably. For the ACM SIGMM community, this meeting offers both a map of where industry standardization is heading and a set of open research problems (spanning perceptual quality assessment, learned compression, edge inference, green streaming, and immersive media delivery) where academic contributions can meaningfully shape the next generation of multimedia standards.

The 155th MPEG meeting will be held in Geneva, Switzerland, from July 13 to 17, 2026. Click here for more information about MPEG meetings and ongoing developments.

References

- [Kerbl, 2023] Bernhard Kerbl, Georgios Kopanas, Thomas Leimkuehler, and George Drettakis. 2023. 3D Gaussian Splatting for Real-Time Radiance Field Rendering. ACM Trans. Graph. 42, 4, Article 139 (August 2023), 14 pages. https://doi.org/10.1145/3592433

- [Tashtarian, 2024] Farzad Tashtarian, Daniele Lorenzi, Hadi Amirpour, Samira Afzal, and Christian Timmerer. 2024. COCONUT: Content Consumption Energy Measurement Dataset for Adaptive Video Streaming. In Proceedings of the 15th ACM Multimedia Systems Conference (MMSys ’24). Association for Computing Machinery, New York, NY, USA, 346–352. https://doi.org/10.1145/3625468.3652179

- [Linder, 2024] Sandro Linder, Samira Afzal, Christian Bauer, Hadi Amirpour, Radu Prodan, and Christian Timmerer. 2024. VEED: Video Encoding Energy and CO2 Emissions Dataset for AWS EC2 instances. In Proceedings of the 15th ACM Multimedia Systems Conference (MMSys ’24). Association for Computing Machinery, New York, NY, USA, 332–338. https://doi.org/10.1145/3625468.3652178